dotCMS Enterprise Cloud (/cms-platform/features) supports multi-node clusters in load-balanced, round-robin, or hot standby configurations.

This document describes the common configuration required to set up a dotCMS cluster. Please review the following sections before performing your clustering configuration:

Initial Setup

The following resources are shared among all servers in the cluster, and must be set up for all clustering configurations. These configuration steps must be completed before implementing the steps for either Auto-clustering or Manual Cluster Configuration.

1. Common Location

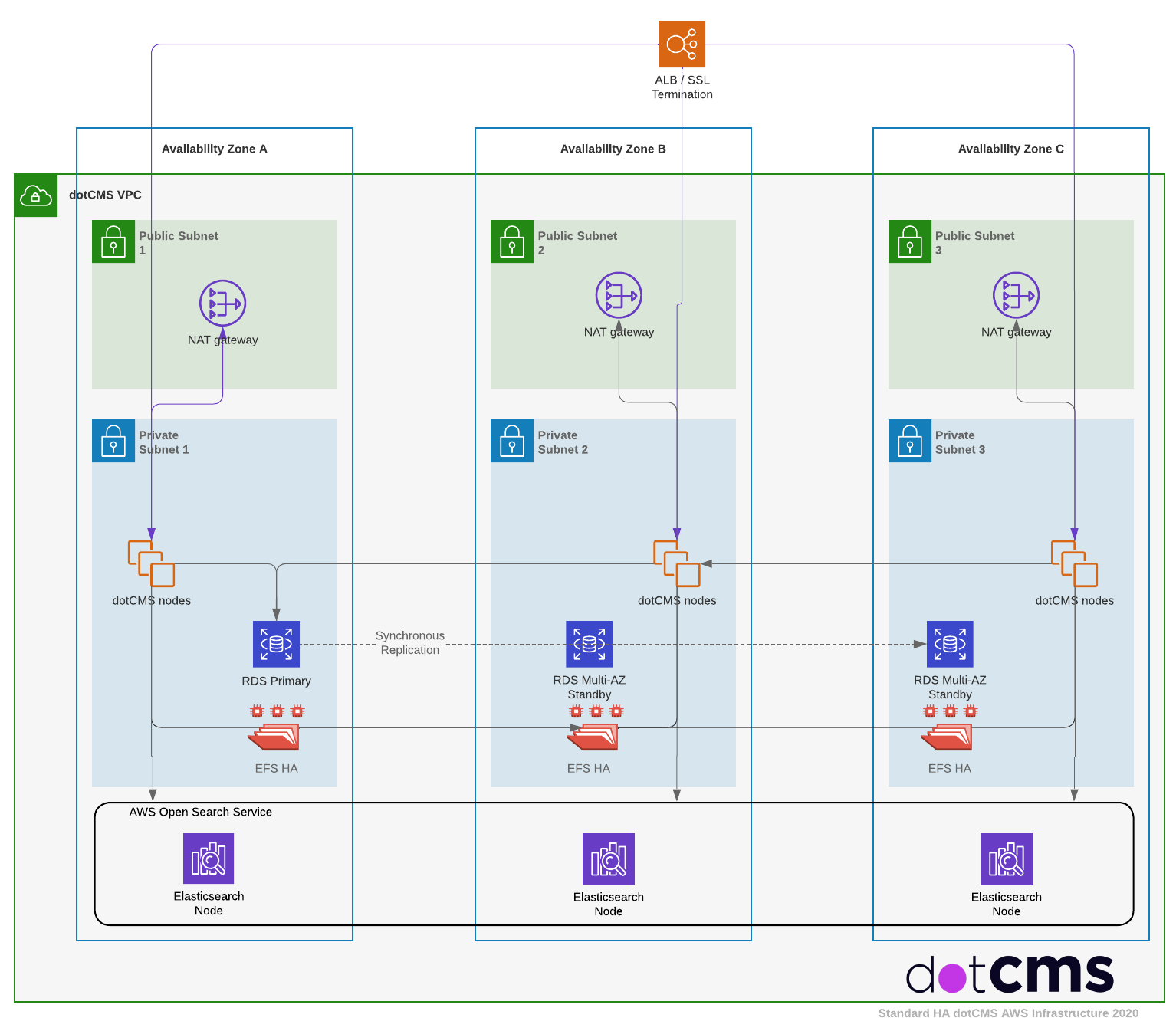

Clustering in dotCMS is designed to be used in a single location/datacenter with low network latency between servers. In the cloud, this means that in a dotCMS cluster, dotCMS nodes can span multiple availability zones but generally should not span regions. Clusters require significant inter-node communications, and having nodes in different regions can negatively affect performance and reliability of the cluster due to network latency or communication failures.

Nodes Spanning Multiple Locations

Push Publishing is the recommended and fully supported solution to span multiple locations.

Although some other methods can be implemented to connect nodes spanning multiple locations, these require custom configuration and support. If you would like more information on other methods of spanning nodes in different physical locations (such as clusters with nodes in different data centers or on separate networks), please contact dotCMS Support for assistance.

2. Shared Database and Elasticsearch

In order to cluster dotCMS, you must first create and set up your initial database and your Elasticsearch cluster. Although caches are stored separately on each node in the cluster, all nodes connect to the same centralized database and Elasticsearch cluster in order to sync data across the cluster.

3. Load Balancer

You will also need a load balancer, with sticky session support enabled, running in front of your dotCMS instances.

4. Shared Assets Directory

dotCMS requires a network share or NAS that shares the contents of the assets directory across all nodes in the cluster. In a clustered dotCMS system, you should configure the path to your assets by setting the ASSET_REAL_PATH variable in the dotmarketing-config.properties file or by setting the DOT_ASSET_REAL_PATH environment variable before starting dotCMS.
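Before starting a node, it can help to confirm that the configured assets path actually points at a mounted, writable share. The following is a minimal sketch, not part of dotCMS: the function name check_assets_path is hypothetical, and it assumes the path is supplied through the DOT_ASSET_REAL_PATH environment variable described above.

```python
import os
import tempfile
from pathlib import Path


def check_assets_path(env=None):
    """Return (ok, message) for the directory named by DOT_ASSET_REAL_PATH.

    Hypothetical helper for illustration; dotCMS does not ship this function.
    """
    env = os.environ if env is None else env
    raw = env.get("DOT_ASSET_REAL_PATH")
    if not raw:
        return False, "DOT_ASSET_REAL_PATH is not set"
    path = Path(raw)
    if not path.is_dir():
        return False, f"{path} is not a directory"
    try:
        # Create and remove a scratch file to confirm the share is writable.
        with tempfile.NamedTemporaryFile(dir=path):
            pass
    except OSError as exc:
        return False, f"{path} is not writable: {exc}"
    return True, f"{path} looks usable"
```

Running this check on every node before startup gives an early signal when one node has lost its mount. It does not verify that all nodes see the same share.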

5. (Optional) Sharing OSGi Plugin Directories

You can share your OSGi plugins and deploy and undeploy them across the whole cluster at the same time, from any node. To do this, you must use the Shared Assets Directory for two of the OSGi folders. Each server in the cluster monitors these shared folders and deploys or undeploys any OSGi jars found there.

Inside the Shared Asset Directory, create folders named /felix/load and /felix/undeployed.

- e.g., dotCMS/assets/felix/load and dotCMS/assets/felix/undeployed.

Replace the server-local OSGi folders under WEB-INF with symlinks to these shared folders, so that:

- dotCMS/WEB-INF/felix/load is a symlink to dotCMS/assets/felix/load

- dotCMS/WEB-INF/felix/undeployed is a symlink to dotCMS/assets/felix/undeployed
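The symlink steps above can be sketched as a short script to run on each node while dotCMS is stopped. This is an illustrative sketch only: the function name link_osgi_folders and the ".local-backup" suffix are assumptions, and the two paths passed in must be adjusted to your installation.

```python
from pathlib import Path


def link_osgi_folders(webinf_felix: Path, shared_felix: Path) -> None:
    """Point the node-local load/undeployed folders at the shared ones.

    Hypothetical helper for illustration; not a dotCMS utility.
    """
    for name in ("load", "undeployed"):
        # Make sure the shared folder exists inside the assets directory.
        target = shared_felix / name
        target.mkdir(parents=True, exist_ok=True)

        link = webinf_felix / name
        link.parent.mkdir(parents=True, exist_ok=True)
        if link.is_symlink():
            # Remove a stale link so it can be recreated.
            link.unlink()
        elif link.exists():
            # Keep the original local folder as a backup instead of deleting it.
            link.rename(link.with_name(name + ".local-backup"))
        link.symlink_to(target, target_is_directory=True)
```

For example, `link_osgi_folders(Path("dotCMS/WEB-INF/felix"), Path("dotCMS/assets/felix"))` would produce the two links listed above. Any jars left in a backed-up local folder are not moved automatically and would need to be copied into the shared folder by hand.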

Note: If you share your plugins across all nodes in your cluster, each node will try to run the code in the plugin's Activator class simultaneously. Keep this in mind when doing “set up type work” in the plugin's Activator.

Testing your Cluster

Test your cache cluster startup

- Shut down and restart one node in the cluster.

- Open the log file for the restarted node and search for “ping”.

Result: When you restart the node, it should “ping” the other servers in the cluster, and you should see the results of those pings in the dotcms.log file. If you do not see “ping” on the other servers in the cluster, then your cluster cache settings are incorrect.
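The log search in this test can also be scripted. The sketch below is a hedged illustration: the function name find_ping_lines is hypothetical, the location of dotcms.log depends on your installation, and the match is a plain case-insensitive substring search, so unrelated words containing "ping" will also match.

```python
def find_ping_lines(log_path, needle="ping"):
    """Return the log lines that contain `needle`, ignoring case.

    Hypothetical helper for illustration; it makes no assumption about
    the exact format of the ping messages dotCMS writes.
    """
    needle = needle.lower()
    matches = []
    # errors="replace" keeps the scan going if the log has odd bytes.
    with open(log_path, encoding="utf-8", errors="replace") as log:
        for line in log:
            if needle in line.lower():
                matches.append(line.rstrip("\n"))
    return matches
```

Calling `find_ping_lines("dotcms.log")` after restarting a node and getting an empty list would correspond to the failure case described above.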